Avatar design for virtual theater aims primarily at expressiveness. It's also important that our actors be able to change avatars and costumes quickly, attach props and use them easily, and move their avatars appropriately.

Another important consideration is server and viewer resources. Every vertex and pixel on an avatar, and every movement, must load into that actor's computer and graphics card, then up into the server hardware and software, then back down into audience computers and graphics cards. Too much data takes too long, so we have to build efficiently – as few vertices and pixels as possible.

Before figuring out how we want our avatars to look, it's important to know how we make them move. Our actors move their avatars while saying their lines, so you are hearing and seeing our avatars speak and move live. Yes, that takes some practice!

This is how a virtual theater actor moves:

- System defaults, which vary depending on which grid we're performing in:
  - Speech – face mesh morphs triggered by Talk sound – make the mouth move.
  - Speech gestures (in some grids) – shoulder and arm animations triggered by Talk sound.
  - Default animations built into viewers and grids – walks, stands, turns, sits, etc., triggered by keyboard taps and sequences.
  - Head and eye movement that can be directed by an actor mouse/touchpad-click on another actor's face or other object.
- Animation override – devices that use created animation files to override the default avatar movements. These form the basis for much of the character representation – such as the hip movement of a sexy young woman, the bending back and halting steps of an elderly man, or maybe a flying fae creature who raises her arms and spins when she lands.
- Emote HUD: a scripted HUD that triggers one or more of the 17 default face expressions – mesh morphs – currently available for OpenSim avatars.
- Animations in props – scripted devices to trigger a movement when a prop, such as a teacup, is attached to the avatar.
- Animations in sets – scripted devices triggered by an avatar clicking on, walking into or "sitting" on a set piece.
- Animations in "gestures" – scripted or system files that can run animation sequences, or connect an avatar movement with other scripted effects, such as the Cheshire cat disappearing a piece at a time.
- Animation files that can be stacked on the screen and triggered one at a time when needed, such as Oedipus kneeling over his dead wife, then standing and holding her chiton pin, then stabbing his eyes with it. In this case the actor triggers the kneel animation by clicking on a scripted set piece, then “stands” from that while attaching the chiton pin, which triggers the hold position for his arm and hand, then he runs the stabbing animation which he had previously stacked on his screen. All while saying his lines.
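
A sequence like the Oedipus one can be planned as a simple cue sheet before rehearsal. The Python sketch below is only a planning aid, not a script that runs inworld. The `Cue` class, the `run_sheet` function and the animation names are all hypothetical, made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cue:
    """One step in a sequence: what the audience sees and how the actor triggers it."""
    action: str     # what the character does
    trigger: str    # what the actor does to make it happen
    animation: str  # hypothetical animation name, for illustration only


# The three steps described above, in performance order.
OEDIPUS_CUES = [
    Cue("kneel over his dead wife", "click the scripted set piece", "kneel"),
    Cue("stand and hold the chiton pin", "attach the pin prop", "hold-pin"),
    Cue("stab his eyes", "run the animation stacked on screen", "stab"),
]


def run_sheet(cues):
    """Return numbered lines an actor could keep next to the script."""
    return [
        f"{number}. {cue.action}: {cue.trigger} -> {cue.animation}"
        for number, cue in enumerate(cues, start=1)
    ]


for line in run_sheet(OEDIPUS_CUES):
    print(line)
```

Listing the trigger next to each action makes it easier to see which steps depend on a set piece, which on a prop, and which the actor has to fire by hand.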

Once I know what a character is going to need to do, I can, working with the director, start figuring out what he or she or it will look like. I also start thinking about how an actor will use each costume piece and prop, so that they'll display appropriately in Inventory and the “My Outfit” links, and be easy to find.

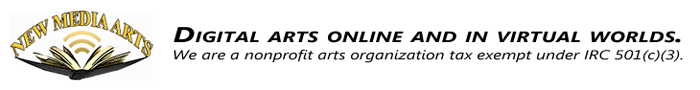

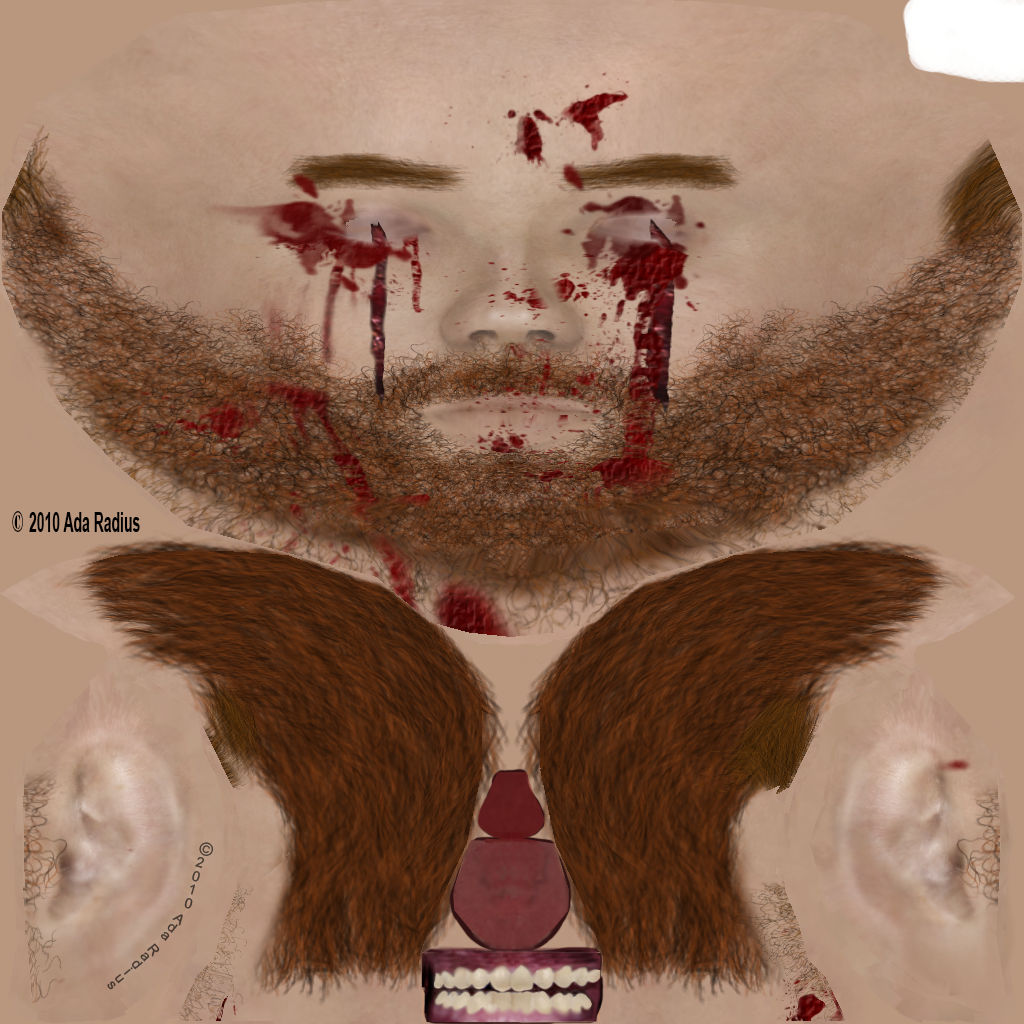

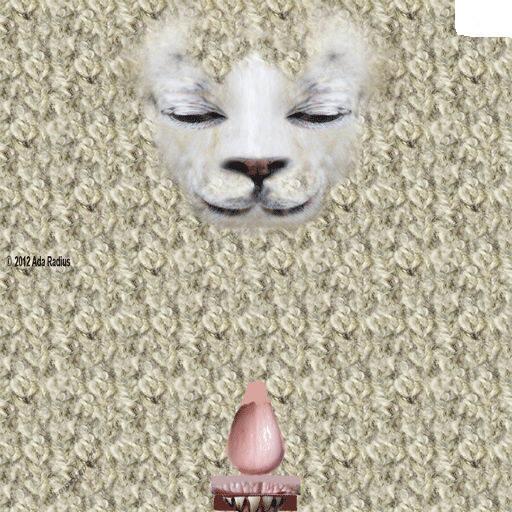

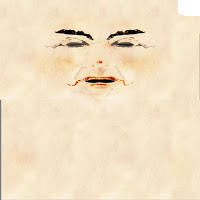

With the simulator, grid and viewer software that is available to us now, we can't make varying mouth movements triggered automatically by Talk if we cover up the default avatar face. We can make a simple “Talk Jaw” - a hinged device, useful for some creatures, but the sound volume doesn't affect the movement. For now, it's usually better, for actors and audience, to use the default avatar face morphs. So I create a “skin” for the default avatar for any parts that will show, i.e., not covered by an attachment – particularly the face. You can see some examples here of human and non-human face textures that will map to the default avatar face. It's similar to the way we see a map of the world on a flat surface, except the avatar body parts here are cut up differently to make flat areas for texturing - ears, teeth and skull separated from the face.

Then I have some decisions to make.

Then I start working on texturing the default avatar pieces that I want visible.

Each component of an avatar's skin (head, torso, hips and legs, scalp and eyebrows) uses a 512x512 pixel image (“texture”), except for the eyes, which use 256x256. If you use a bigger texture (1024x1024 is the biggest allowed in the system), it may take longer to load and will be converted to 512x512 on the avatar skin. A smaller texture will also be converted to 512x512 and won't display well. If I know a component of the avatar skin will be completely covered, I'll use my own 4x4 blank texture (the smallest allowed in the system) on that component.
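
To put rough numbers on those sizes, here is a back-of-the-envelope sketch in Python. It assumes uncompressed 32-bit RGBA pixels (4 bytes each). That is an assumption for illustration only: what actually travels over the network or sits in graphics memory depends on compression and on the viewer. The function name `texture_bytes` is hypothetical.

```python
BYTES_PER_PIXEL = 4  # assumption: uncompressed RGBA, 8 bits per channel


def texture_bytes(width, height, bytes_per_pixel=BYTES_PER_PIXEL):
    """Return the uncompressed size of one texture, in bytes."""
    return width * height * bytes_per_pixel


# Texture sizes mentioned in the text.
SIZES = {
    "skin component (512x512)": (512, 512),
    "eyes (256x256)": (256, 256),
    "largest allowed (1024x1024)": (1024, 1024),
    "blank placeholder (4x4)": (4, 4),
}

for name, (width, height) in SIZES.items():
    kib = texture_bytes(width, height) / 1024
    print(f"{name}: {kib:.2f} KiB")
```

By this estimate a 1024x1024 texture costs as much as four 512x512 textures, while a 4x4 blank costs almost nothing.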

There is a default set of system “clothes” for the default avatar, and they can be very useful – undershirt, underpants, socks, gloves, shirt, pants, skirt, jacket and shoes, each of which is painted onto the avatar shape in order (the underpants layer can't be stacked on top of pants, for example). Each of these clothing layers displays a 512x512 pixel texture, same as skin. There is also a tattoo layer, which displays just over the avatar “skin”, and an alpha layer that can be designed to selectively make a part of the avatar, including clothing layers, disappear. This is useful under attached costume pieces. All of the system skin and clothing layers move with the avatar animation automatically.
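
The fixed layering can be pictured as a sorted list. The Python sketch below assumes the order in which the layers are listed above is the order in which they are painted, bottom to top. That is an assumption for illustration, and the exact order in a given viewer may differ. The names `LAYER_ORDER`, `paint_order` and `is_above` are hypothetical.

```python
# Assumption: bottom-to-top paint order, taken from the list in the text.
LAYER_ORDER = [
    "undershirt", "underpants", "socks", "gloves",
    "shirt", "pants", "skirt", "jacket", "shoes",
]


def paint_order(worn):
    """Return the worn layers sorted into the fixed paint order."""
    unknown = set(worn) - set(LAYER_ORDER)
    if unknown:
        raise ValueError(f"not a system clothing layer: {sorted(unknown)}")
    return [layer for layer in LAYER_ORDER if layer in worn]


def is_above(layer, other):
    """True if `layer` is painted over `other`."""
    return LAYER_ORDER.index(layer) > LAYER_ORDER.index(other)


# Whatever order the actor puts things on, the result is the same.
print(paint_order({"pants", "underpants", "shirt"}))
print(is_above("underpants", "pants"))
```

The point of the sketch is that the actor chooses which layers to wear but not how they stack.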

The OpenSim avatar also has 30 attachment points for props and costume pieces: eyes, ears, chest, and so on. More than one attachment can be added to a point, if the actor is careful about the attachment order and adds the additional piece so that it doesn't replace the previous one.

For several years in Second Life and the OpenSim grids, “attachments” were limited to inworld prim pieces. Then sculpt maps (pixel-based representations of shape) came along, which gave us more shaping options. Sculpt maps are still useful for animating shapes – Medusa's hair, for example. Prim and sculpt attachments are still used quite a lot, but will not “move” with an animation, except to stay attached to the attachment point. Sculpt maps are harder on viewer graphics cards than “mesh” attachments.

“Mesh” attachments are widely used now that they're visible in most OpenSim grids and viewers, and on audience hardware. “Mesh” is a misnomer, of course – everything we see in a viewer is “mesh”: vertices and faces, painted with texture images. But for this purpose, the term “mesh” refers to a 3D model, a .dae file, that is created in software such as Blender or SketchUp, and uploaded to the grid to use instead of or in addition to the inworld builder tools (“prims”) or “sculpt” maps.

A mesh attachment has a huge advantage over prim and sculpt attachments, because it can be created with better modeling and texturing, and “rigged” to move with the avatar animations. We can load the default avatar mesh model into Blender, get it dressed, and rig the clothing to the avatar so that it'll move, not perfectly, but recognizably, on the avatar inworld. It doesn't have to be perfect; it's a cartoon.

This is one of the triplets – ugly babies – in the story. I adjusted the default avatar to make the shape, snagged a child skin and mesh eyes I'd made for another show, and deformed the skeleton to make it small with a big head. Then simple mesh jammies with an off-the-shelf texture that I rigged to the avatar skeleton. I ended up combining the deform rig with the jammies – one less thing to wear. One of our troupe members, Rowan Shamroy, created the animations, so they could sit in the grocery cart, fighting and drinking. From that we made NPCs (non-player characters – scripted, animated avatar representations), and shot the footage.